AI-Generated Art: The Promise and Peril of Stable Diffusion

Artificial Intelligence is no longer confined to predicting numbers or analyzing spreadsheets. With the advent of Stable Diffusion, a text-to-image deep learning model, AI has stepped into the world of art - turning written prompts into photorealistic or stylistically rich images. This shift raises both excitement and difficult questions: Can a machine truly “create art”? What happens to the role of human artists? And who owns the copyright of an AI-generated image?

1. How Stable Diffusion Works

At its core, Stable Diffusion belongs to a family of models called diffusion models. The principle is deceptively simple:

- Take an image and gradually add random Gaussian noise until it becomes unrecognizable.

- Train a neural network to reverse this process - to denoise step by step.

- Guide the denoising using a text embedding so the output matches your prompt.

\( q(x_t \mid x_{t-1}) = \mathcal{N}(x_t; \sqrt{1-\beta_t}\,x_{t-1}, \beta_t I) \)

Here, \(x_t\) is the noisy image at step \(t\), and \(\beta_t\) controls how much noise is added. The network learns to predict the noise and subtract it, eventually producing a clean, coherent image.
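The forward noising step in the equation above can be sketched in a few lines of NumPy. This is a minimal illustration rather than actual training code: the linear \(\beta_t\) schedule, the 1,000 steps, the random stand-in "image", and the function name `forward_diffusion` are all assumptions chosen for the example.

```python
import numpy as np

def forward_diffusion(x0, betas, rng):
    """Apply q(x_t | x_{t-1}) once per beta, starting from a clean image x0."""
    x = x0.copy()
    for beta in betas:
        noise = rng.standard_normal(x.shape)
        # Shrink the current image slightly and mix in Gaussian noise
        # with variance beta, exactly as in the formula above.
        x = np.sqrt(1.0 - beta) * x + np.sqrt(beta) * noise
    return x

rng = np.random.default_rng(0)
x0 = rng.uniform(-1.0, 1.0, size=(64, 64))   # stand-in for a normalized image
betas = np.linspace(1e-4, 0.02, 1000)        # assumed linear noise schedule
xT = forward_diffusion(x0, betas, rng)

# After enough steps the result is close to pure standard Gaussian noise.
print(f"mean={xT.mean():.3f}, std={xT.std():.3f}")
```

With a schedule like this, almost nothing of the original image survives by the final step, which is why the reverse (denoising) process can start from pure noise.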

Stable Diffusion's architecture combines three main blocks:

- Variational Autoencoder (VAE): Compresses images into a smaller “latent space,” reducing compute needs.

- U-Net: Learns to denoise the latent image at each step.

- Text Encoder: Often CLIP, which transforms a sentence like “a cat wearing sunglasses” into a vector that guides generation.

But what makes Stable Diffusion efficient enough to run on consumer GPUs? The secret lies in the word "latent". Instead of performing the noise-addition and denoising process in high-dimensional pixel space (which requires massive computational power and memory), Stable Diffusion compresses images into a lower-dimensional latent space using the VAE encoder. For a 512x512 image, the latent representation is typically 64x64 (with 4 channels in SD 1.x). The diffusion process occurs entirely in this compressed space. Once the latent representation is fully denoised, the VAE decoder reconstructs it back into pixel space. This approach, known as Latent Diffusion Models (LDMs), drastically reduces training costs and inference time while preserving most fine visual detail.
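To get a feel for the savings, the arithmetic can be worked through directly. The sketch below assumes the SD 1.x configuration (an 8x spatial downsampling factor and 4 latent channels); the helper name `latent_shape` is made up for this example.

```python
def latent_shape(height, width, downsample=8, latent_channels=4):
    """Shape of the VAE latent for an image, assuming SD 1.x-style settings."""
    return (latent_channels, height // downsample, width // downsample)

pixel_values = 512 * 512 * 3                 # RGB image in pixel space
channels, h, w = latent_shape(512, 512)
latent_values = channels * h * w             # values the U-Net actually denoises
compression = pixel_values / latent_values

print(f"latent shape: {(channels, h, w)}")
print(f"pixel values: {pixel_values}, latent values: {latent_values}")
print(f"compression factor: {compression:.0f}x")
```

Under these assumptions the U-Net works on roughly 48 times fewer values per image than a pixel-space diffusion model would.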

Another critical mechanism is Classifier-Free Guidance (CFG). When you type a prompt, how does the model know whether to listen closely to your text or follow its own creative intuition? During training, the text conditioning is sometimes randomly dropped (replaced with an empty prompt). During generation, the model predicts the noise twice: once with your prompt, and once without it. By extrapolating the difference between these two predictions, CFG forces the generation to move further away from generic images and closer to your specific text. A high CFG scale strictly adheres to your prompt but can cause visual artifacts, while a low CFG scale yields more artistic freedom.
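The extrapolation step can be written as a single line. The sketch below uses small NumPy arrays in place of real noise predictions; the function name and the toy values are illustrative, and 7.5 is used only because it is a common default guidance scale.

```python
import numpy as np

def classifier_free_guidance(noise_uncond, noise_cond, guidance_scale):
    """Push the prediction away from the unconditional one, toward the prompt."""
    return noise_uncond + guidance_scale * (noise_cond - noise_uncond)

# Toy stand-ins for the two noise predictions made at one denoising step.
noise_uncond = np.array([0.0, 1.0])   # prediction with an empty prompt
noise_cond = np.array([1.0, 3.0])     # prediction with the user's prompt

guided = classifier_free_guidance(noise_uncond, noise_cond, guidance_scale=7.5)
print(guided)
```

A scale of 1 simply returns the prompt-conditioned prediction, while larger values exaggerate the difference between the two predictions, which is where both the stronger prompt adherence and the artifacts come from.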

2. Why It Matters

Stable Diffusion is more than a novelty. It has:

- Lowered the barrier to entry: Anyone with a GPU or cloud access can generate images in seconds.

- Expanded creativity: Designers use it to brainstorm concepts, game studios to create assets, and educators for illustrations.

- Accelerated iteration: Artists can test multiple styles and directions in hours instead of weeks.

This democratization mirrors what Photoshop did decades ago - but on steroids. Still, such power is not without risks.

3. Biases and Limitations

Because Stable Diffusion was trained on subsets of the massive, web-scraped LAION-5B dataset, it reflects the same biases found on the internet. For example:

- “CEO” often generates images of men in suits.

- “Nurse” is more likely to return women.

- Underrepresented cultures or styles are harder to generate accurately.

These patterns show that AI is not neutral - it amplifies what it has seen. Mitigating bias requires better-curated datasets, balanced training, and responsible use of prompts.

4. Ethical and Legal Challenges

The most heated debate concerns ownership. Many artists argue their works were scraped without consent. Does AI art infringe on copyrights if it mimics a particular style? Courts are still debating this. Some companies now allow artists to opt out of future training sets, but critics argue it should be opt-in only.

Another concern is misuse. AI art can be used to create deepfakes, disinformation, or inappropriate content. This forces platforms and governments to consider regulations that balance innovation with safety.

5. A Hands-On Demo

Generating images with Stable Diffusion is surprisingly easy.

Here’s a minimal Python example using Hugging Face’s diffusers:

from diffusers import StableDiffusionPipeline

import torch

# Load pre-trained pipeline

model_id = "CompVis/stable-diffusion-v1-4"

pipe = StableDiffusionPipeline.from_pretrained(model_id, torch_dtype=torch.float16)

pipe = pipe.to("cuda")

# Your creative prompt

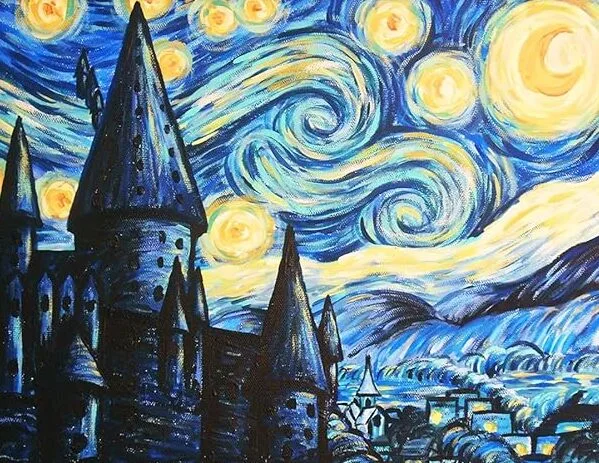

prompt = "A medieval castle floating on clouds, painted in Van Gogh style"

image = pipe(prompt).images[0]

image.save("castle.png")

6. From Stable Diffusion to FLUX: The 2023–2025 Generation Leap

In the two years following this article’s original publication, the open-source image generation ecosystem underwent a dramatic transformation. Here is what changed:

Stable Diffusion XL (SDXL) - July 2023

Stability AI released SDXL, a two-stage model (base + refiner) that produces 1024×1024 images natively. SDXL introduced a larger UNet, additional conditioning on image size and crop parameters, and an ensemble pipeline. The result was dramatically sharper details, better text rendering, and improved composition compared to SD 1.x and 2.x - all while remaining fully open-weight.

Stable Diffusion 3 & 3.5 - 2024

Stable Diffusion 3 (announced in February 2024, followed by SD3.5 in October 2024) replaced the UNet backbone with a Multimodal Diffusion Transformer (MMDiT). This architecture processes text and image tokens in the same attention layers, enabling much richer text–image alignment. SD3 made major progress on one of the field's most persistent problems: legible, accurate text rendering within generated images, a task where earlier diffusion models consistently failed.
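The idea of text and image tokens sharing an attention layer can be illustrated with a toy, single-head NumPy sketch. It is a simplification under stated assumptions, not the SD3 implementation: the token counts, the dimension, the random weights, and the names `joint_attention`, `text_proj`, and `image_proj` are all invented for the example. It follows the commonly described MMDiT design in which each modality has its own projection weights but attention runs over the concatenated sequence.

```python
import numpy as np

def softmax(scores):
    """Row-wise softmax with the usual max-subtraction for stability."""
    scores = scores - scores.max(axis=-1, keepdims=True)
    exp = np.exp(scores)
    return exp / exp.sum(axis=-1, keepdims=True)

def joint_attention(text_tokens, image_tokens, text_proj, image_proj):
    # Each modality uses its own query/key/value projection matrices...
    q = np.concatenate([text_tokens @ text_proj["q"], image_tokens @ image_proj["q"]])
    k = np.concatenate([text_tokens @ text_proj["k"], image_tokens @ image_proj["k"]])
    v = np.concatenate([text_tokens @ text_proj["v"], image_tokens @ image_proj["v"]])
    # ...but attention is computed over the combined sequence, so every
    # text token can attend to every image token and vice versa.
    weights = softmax(q @ k.T / np.sqrt(q.shape[-1]))
    mixed = weights @ v
    n_text = text_tokens.shape[0]
    return mixed[:n_text], mixed[n_text:], weights

rng = np.random.default_rng(0)
dim = 16
text_tokens = rng.standard_normal((5, dim))     # e.g. 5 prompt tokens
image_tokens = rng.standard_normal((12, dim))   # e.g. 12 latent patches
text_proj = {name: rng.standard_normal((dim, dim)) for name in "qkv"}
image_proj = {name: rng.standard_normal((dim, dim)) for name in "qkv"}

text_out, image_out, weights = joint_attention(
    text_tokens, image_tokens, text_proj, image_proj
)
print(text_out.shape, image_out.shape, weights.shape)
```

The attention matrix covers all 17 tokens at once, which is the property that lets information flow in both directions between the prompt and the image.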

FLUX.1 - August 2024

FLUX.1, released by Black Forest Labs (founded by several of the original Stable Diffusion researchers), became the new community benchmark. It pairs a transformer-based architecture with a flow-matching training objective (rather than the DDPM-style noise-prediction objective of early Stable Diffusion), producing images with exceptional photorealism, human anatomy accuracy, and prompt adherence. FLUX.1 [dev] (open-weight, non-commercial licence) and FLUX.1 [schnell] (4-step fast variant, Apache 2.0 licence) became the go-to models for local generation by late 2024.
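To give a rough sense of what a flow-matching objective looks like, here is a toy NumPy sketch of the straight-line ("rectified flow") variant. It is an illustration under assumptions, not FLUX.1's training code: the time convention (t=0 is data, t=1 is noise), the function names, and the "oracle" velocity that stands in for a trained network are all chosen for the example.

```python
import numpy as np

def interpolate(data, noise, t):
    """Point on the straight line between a data sample (t=0) and noise (t=1)."""
    return (1.0 - t) * data + t * noise

def velocity_target(data, noise):
    """Regression target for the network: the constant direction of that line."""
    return noise - data

def euler_sample(noise, velocity_fn, num_steps):
    """Walk from noise (t=1) back to data (t=0) with fixed-size Euler steps."""
    x = noise.copy()
    dt = 1.0 / num_steps
    for i in range(num_steps):
        t = 1.0 - i * dt
        x = x - dt * velocity_fn(x, t)
    return x

rng = np.random.default_rng(0)
data = rng.uniform(-1.0, 1.0, size=(8, 8))   # stand-in for a latent image
noise = rng.standard_normal((8, 8))

# An oracle that returns the exact velocity; a real model only approximates it.
oracle = lambda x, t: velocity_target(data, noise)
sample = euler_sample(noise, oracle, num_steps=4)
print(np.abs(sample - data).max())
```

Because the path is a straight line, a perfect velocity estimate recovers the data in very few steps, which gives some intuition for why few-step variants such as a 4-step sampler are feasible.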

Here is how to generate an image with FLUX.1 [schnell] using the Diffusers library:

```python
# pip install diffusers transformers accelerate torch
from diffusers import FluxPipeline
import torch

pipe = FluxPipeline.from_pretrained(
    "black-forest-labs/FLUX.1-schnell",
    torch_dtype=torch.bfloat16,
)
pipe.enable_model_cpu_offload()  # saves VRAM

prompt = "A medieval castle floating on clouds, dramatic lighting, hyperrealistic"
image = pipe(
    prompt,
    guidance_scale=0.0,  # schnell is timestep-distilled and runs without guidance
    num_inference_steps=4,
    height=1024,
    width=1024,
).images[0]
image.save("castle_flux.png")
```

The Competitive Landscape in 2025

| Model | Release | Licence | Strengths |
|---|---|---|---|
| FLUX.1 [dev/schnell] | Aug 2024 | Non-commercial (dev) / Apache 2.0 (schnell) | Best open-weight photorealism; accurate anatomy; fast (4 steps with schnell) |
| Stable Diffusion 3.5 | Oct 2024 | Stability AI Community | MMDiT architecture; excellent text rendering; multiple sizes (2.5B / 8B) |
| Midjourney v6.1 | 2024 | Proprietary (closed) | Best aesthetic coherence; editorial quality; popular among artists |
| DALL-E 3 / GPT-4o | 2023 / 2024 | API (OpenAI) | Safety-focused; accurate prompt following; integrated in ChatGPT |
| Adobe Firefly 3 | 2024 | Proprietary (Adobe) | Commercially safe (trained on licensed data); Photoshop integration |

7. Looking Ahead: Ethical and Social Considerations

Stable Diffusion has opened the floodgates of AI-generated creativity. But the road ahead depends on three things:

- Ethical datasets: Respecting artists’ rights and cultural diversity. Ongoing lawsuits (e.g., Getty Images v. Stability AI) are shaping how training data must be licensed in future models.

- Transparency: Clear disclosure when images are AI-generated. The EU AI Act, whose transparency obligations are due to apply from August 2026, requires AI-generated content to be marked or labelled as such. C2PA (Content Credentials) is emerging as a widely adopted standard for provenance metadata.

- Balance: Positioning AI as a co-creator, not a replacement for human imagination. Models like Adobe Firefly point to one possible path: according to Adobe, they are trained on licensed and public-domain content, with compensation for contributing Adobe Stock artists.

Just as photography once transformed art, AI image generation is reshaping creative industries. The key question is not whether AI can create art, but how we as a society choose to integrate it responsibly.

8. Conclusion

Stable Diffusion represents a pivotal moment in the intersection of artificial intelligence and human creativity. By making high-quality image generation accessible to anyone with a computer, it has fundamentally altered the creative landscape. The technology's open-source nature has fostered a vibrant community of developers, artists, and researchers who continue to push the boundaries of what is possible.

However, this rapid progress demands equally rapid development of ethical guidelines, legal frameworks, and community standards. The challenge ahead is not purely technical - it is social, cultural, and political. How we navigate questions of attribution, consent, and artistic authenticity will define whether generative AI becomes a liberating tool or a disruptive force.

What is clear is that Stable Diffusion and its successors are here to stay. The most productive path forward lies in treating AI as a collaborator rather than a competitor - a powerful brush in the hands of human imagination.
